Run models through the API

Run models using the V7 API

Once you've trained a model, you can keep it running in V7 and deploy it via the API. This guide shows how to test your model in V7 and then use the API to put it into production.

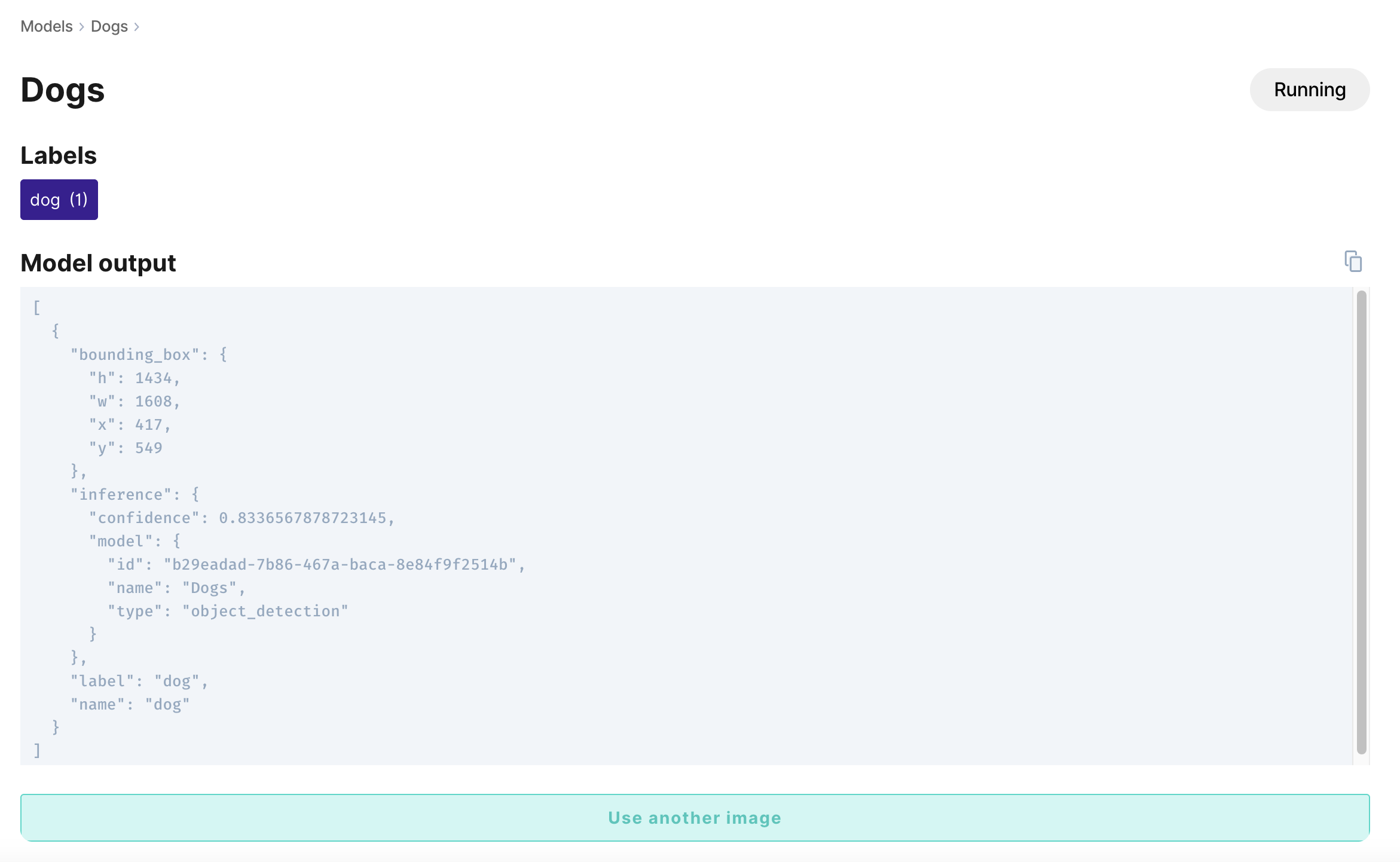

Test your model

Once your model has finished training, open it and add an image to test it in V7.

This shows an example of the output annotation, which you can copy to your clipboard.

Deploy your model

If your model's ready to deploy, you can push it into production in two steps:

1. Generate an API key

Any keys that have been previously generated for your team under Settings > API Keys will appear under Add Existing Key at the bottom of the model summary page. **API keys are encrypted, so if you no longer have access to an existing key, you can generate a new API key from the model page by clicking New Key.**

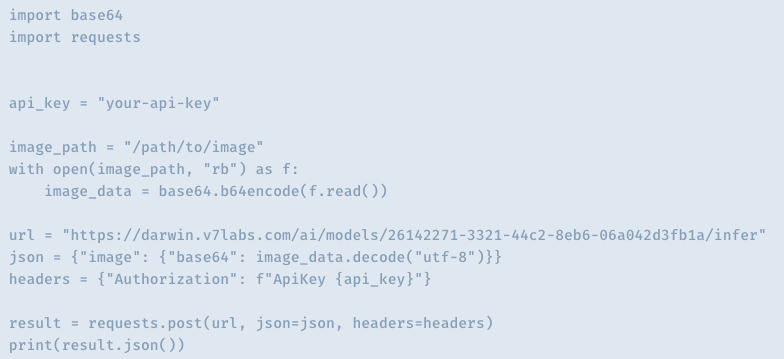
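Rather than pasting the key directly into a script, you may prefer to read it from the environment. The following is a minimal sketch, not part of the V7 templates: the function name `auth_headers` and the variable name `V7_API_KEY` are placeholders chosen for this example, while the `ApiKey <key>` header format is the one used in the Python template later in this guide.

```python
import os


def auth_headers(env_var: str = "V7_API_KEY") -> dict:
    """Build the Authorization header from an API key stored in the environment.

    The default variable name V7_API_KEY is a placeholder chosen for this example.
    """
    api_key = os.environ.get(env_var, "").strip()
    if not api_key:
        raise RuntimeError(f"Set the {env_var} environment variable to your API key")
    # Same header format as the Python template on the model summary page
    return {"Authorization": f"ApiKey {api_key}"}
```

Keeping the key out of the source file makes it easier to swap in a newly generated key without editing the script.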

2. Paste your command from V7

The model summary page contains template commands for using your deployed model in Python, JavaScript, and Elixir, as well as through CLI commands and the Shell.

These commands were designed to be plugged into custom scripts to run your model. After copying the commands, paste your API key and image path into the fields provided.

Let's take a closer look at the Python commands, for example. The model summary page displays the following commands:

Here's the same command with a bit more detail. V7 will add the model's unique model_id to your URL automatically:

    import base64

    import requests

    # What you need in order to run the script
    api_key = "YOUR-API-KEY"
    image_path = "/path/to/your/image"
    model_id = "model-uuid"

    # Encode your image in base64
    with open(image_path, "rb") as f:
        image_data = base64.b64encode(f.read())

    # Prepare the request headers and payload
    headers = {"Authorization": f"ApiKey {api_key}"}
    payload = {"image": {"base64": image_data.decode("utf-8")}}

    # Send the inference request
    url = f"https://darwin.v7labs.com/ai/models/{model_id}/infer"
    result = requests.post(url, json=payload, headers=headers)

    # Print the response payload
    print(result.json())

These same steps can be used to run publicly available models. If you have the model ID for a public model on V7, plug in your API key, image path, and the model's ID to get a response in milliseconds.
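For use in a custom script, the steps above can be wrapped in a pair of small functions. This is a sketch under stated assumptions rather than an official client: the function names `build_inference_request` and `run_inference` and the 30-second default timeout are choices made for this example, while the URL, header, and payload shapes follow the template above.

```python
import base64
from pathlib import Path


def build_inference_request(model_id: str, image_path: str, api_key: str):
    """Return the (url, headers, payload) triple for one inference call."""
    # Encode the image file as base64 text, as in the template
    image_b64 = base64.b64encode(Path(image_path).read_bytes()).decode("utf-8")
    url = f"https://darwin.v7labs.com/ai/models/{model_id}/infer"
    headers = {"Authorization": f"ApiKey {api_key}"}
    payload = {"image": {"base64": image_b64}}
    return url, headers, payload


def run_inference(model_id: str, image_path: str, api_key: str, timeout: float = 30.0):
    """Send the image to the model and return the decoded JSON response."""
    # Imported here so build_inference_request can be used without the dependency
    import requests

    url, headers, payload = build_inference_request(model_id, image_path, api_key)
    # The timeout value is an assumption for this sketch; tune it for your workload
    response = requests.post(url, json=payload, headers=headers, timeout=timeout)
    response.raise_for_status()  # raise on 4xx/5xx instead of parsing an error body
    return response.json()
```

Calling `run_inference("model-uuid", "/path/to/your/image", "YOUR-API-KEY")` should then behave like the template, with the addition that HTTP errors raise an exception rather than being printed as a response body. Passing the ID of a public model works the same way.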
